It is difficult (if not impossible) to derive analytic solutions to many problems in economics. Economists must therefore rely on numerical methods to derive approximate solutions to these problems. For instance, consider solving the standard growth model in macroeconomics

We could solve this problem in many ways. One way would be to write the problem as a dynamic program and use approximate value function iteration. The researcher first discretizes the state space and then solves the for the value function at each point and interpolates for the value function in between points. This can be computationally expensive however and will not provide an analytic approximation. If we were interested in getting an analytic approximation instead, we could use the Homotopy Analysis Method proposed by Liao Shijun. While there is no guarantee that this method will be any less complex, it is definitely an interesting methodology and helped me experiment with Julia’s implementation of a Computer Algebra System (CAS).

Basic Mechanics

In the Homotopy Analysis Method, we create a homotopy between a solution that is easy to solve and the solution we are interested in. The homotopy will depend on a parameter such that when

we have the easy solution and when

we have the solution to the problem we want to solve. To illustrate this, consider the baby growth model. The first order conditions are given by

We are interested in policy functions for and

in terms of

. We could guess an initial solution

, i.e. consumer some constant fraction of output in each period, and then solve for the

that satisfies our first order condition. However, we in general don’t know the functional form of the solution and so would have to get lucky for this method to work. However, we could also start with this initial guess and then attempt to deform it until it approximates, or equals, the solution to our original problem. This is what the Homotopy Analysis Method does. Consider a new function,

, where

We then look for solutions to . When

, we get our easy solution back and when

we get the solution to our first order conditions. For every

there will be a solution, so we can index this family of solutions by

and write it as

. Here is the first jump in the Homotopy Analysis Method. We assume that the family of solutions has a Taylor series expansion that converges when

. So

can be written as

This assumption seems strong at first, but with a few adjustments can be made more reasonable. For the time being just note that implies that the solution to our original problem is

and when

our solution is given by

. It is worth mentioning that assuming $\phi(k;q)$ has a Taylor series expansion does not imply that any of the

have Taylor expansions. In fact, in the baby growth model we have that

does not have a Taylor series expansion around

. The higher order derivatives all diverge to

but, as we will see, we can still generate a close approximation. The question remains, how do we solve for

? When

, the answer is obvious. Consider the homotopy again (I am going to drop time subscripts now),

This looks very complex, but setting , we can immediately see that

. Because

by our Taylor series expansion, we have solved for

. For

, we can proceed as we do in deriving a standard Taylor series expansion; take derivatives of the above equation with respect to

and then set

. You can confirm that for

this will imply

Technically, you can proceed as above and write out the higher order terms. It will be a pain and you will make mistakes. Better to have a computer do it for you. Before we start doing this with Julia, I want to write out the generalized formulation of the Homotopy Analysis Method. Let be an arbitrary linear operator (such as the identity operator or differentiation),

an arbitrary constant, and

an arbitrary function of

(but importantly not a function of

). Then we can create the new homotopy

Adding in these additional pieces will not change the solution to our initial guess or the solution to our first order conditions. However, they offer flexibility that will help us ensure that our approximation will converge or improve the rate of convergence. In fact, we call the convergence control parameter because we can choose its value to ensure that our Taylor series converges when

. The role the other terms play will be made clearer below as we walk through a numeric example.

SymEngine / SymPy

The Homotopy Analysis Method relies on the researchers ability to take high order derivatives of potentially complex expressions. Doing this by hand is unfeasible, even for relatively simple problem like the baby growth model. Computer Algebra Systems can take care of the accounting for us, making this method simple, fast, and reliable. If you want a really powerful CAS, go learn Mathematica. I am more interested in exploring what Julia has to offer, so I tried SymEngine and Sympy. SymEngine is very fast while SymPy is more flexible, so which one you use really depends on your needs. I will give a brief overview of both methods.

Download both packages in the usual way. SymPy requires that you have Python installed and the Python package Sympy. It then uses PyCall to make SymPy available in Julia. For more information on installation, you can view their documentation here. SymEngine is just a wrapper for that C+ library and can simply be downloaded using Pkg.add.

using SymEngine using Base.Cartesian

SymEngine will treat symbols as variables and performs basic operations on them. Suppose we want to define the production function in baby growth model. First, we declare our variable , the capital stock. We define the constant

. Finally, we generate an expression for

.

k = symbols(:k) θ = 0.33 y = k^θ

k^0.33

We can perform any algebraic manipulations we want to the symbol and SymEngine will return a new symbol that we can then similarly manipulate. More importantly though (for our purposes), we can differentiate

with respect to

.

diff(y, k)

0.33*k^(-0.67)

We can even leave parameters undefined and perform these operations. For instance, rather than defining we can just leave it as a symbol as well.

k = symbols(:k) θ = symbols(:θ) y = k^θ diff(y,k)

k^(-1 + θ)*θ

When we want to evaluate for specific values of

and

, we use the “subs” function.

subs(y, subs(y, θ => 0.33))

k^0.33

We ultimately will want to evaluate these expressions as floats. However, if you substitute for both parameters above you will find an odd result.

val = subs(subs(y, θ => 0.33), k => 2.0)

1.25701337452183

typeof(val)

SymEngine.Basic

We get around this by using the function N(). This takes the SymEngine.Basic type and returns a Float64 when it can.

typeof(N(val))

Float64

Also, we can use the following shorthand for subs

subs(y, θ => 0.33) == y(θ => 0.33)

The syntax for SymPy is similar, but there more nuances that you need to get used to. First, to load SymPy you need to include the module with a macro

using PyCall @pyimport sympy

To declare a symbol, we use SymPy’s symbol function, which will return a PyCall.PyObject

q = sympy.Symbol("q")

x_0 = sympy.Symbol("x_0")

ϕ = x_0

@time @nexprs 10 j->(ϕ += x_j *q^j)

PyObject q**10*x_10 + q**9*x_9 + q**8*x_8 + q**7*x_7 + q**6*x_6 + q**5*x_5 + q**4*x_4 + q**3*x_3 + q**2*x_2 + q*x_1 + x_0

We can manipulate this object in the same way that we manipulated the SymEngine.Basic object. We can differentiate using sympy.diff. We can multiply by a constant or add two objects together. However, you should note that in the basic implementation of SymPy you cannot pre-multiply by a constant, only post-multiply. So if is a PyObject and

is a predefined constant, then

will return an error but

will not.

Substituting in values is accomplished by the following

y[:subs](k, 1.0)

PyObject 0.798317932252050

We can convert this to a float simply by piping, i.e.

y[:subs](k, 1.0) |> float

0.798317932252050

My preference for using SymPy comes from the fact that it can carry out pretty robust simplification. The derivatives we are dealing with are complex (lots of powers nested inside powers) but we will often be able to choose initial guesses and auxiliary functions to reduce the number of derivatives we need to cancel. In order to do this however, the CAS needs to be able to simplify an expression. SymEngine does not appear to do this yet, but SymPy does. If you want to use SymPy (and it is what I will be using) be sure to check out its rules for simplification. It will not perform simplifications unless they apply generally. You can read more about this here. This is enough to get us started, so back to the baby growth model.

Baby Growth Model

The first thing we need to do is parameterize the problem. is the discount factor,

is the capital share, and

is our initial guess at how much the agent consumes of their capital stock each period. Again,

is the convergence control parameter. I generally set it at

for most problems until I start fine tuning. More on that later.

β = 0.8 θ = 0.33 α = 0.6 h = -1.0

We need a quick way to create polynomials of an arbitrary degree. I like to use the Base.Cartesian package. With the macro @nexprs we can create multiple variables with the same prefix in one line of code. For instance,

q = symbols(:q) @nexprs 5 j->(x_j = symbols(:x_j)) x_0 = symbols(:x_0) ϕ = x_0 @nexprs 5 j->(ϕ += x_j *q^j)

x_0 + q*x_1 + q^2*x_2 + q^3*x_3 + q^4*x_4 + q^5*x_5

Everything here is a symbol except for the exponents. Ultimately, each of the will represent some function of

and our approximation up to

terms will just be

, i.e.

ϕ(q=>1)

x_0 + x_1 + x_2 + x_3 + x_4 + x_5

Our goal is to figure out what the functions are. We already know what

is from the discussion of theory above: the initial guess. The remaining

are easy to calculate using what is called the Homotopy Derivative

To see why this is useful, it helps to calculate the first few coefficients . To find

we can evaluate differentiate our homotopy with respect to

(for the time being ignore the convergence control parameter and the auxiliary function/linear operator)

Remember that , so setting

and

we get

or . So

is equal to the zeroth derivative of

evaluated at

. Taking the second derivative of our homotopy gives

Again, evaluating at and

, we have that

or

If you carried this out for higher order derivatives you would notice that for the process follows

Programming the Homotopy Analysis Method

function F′(express::SymEngine.Basic, n)

for i = 1:n

express = diff(express, q)

end

express

end

function x_n(n, x0, c0, Hk)

@eval begin

q = sympy.Symbol("q", positive = true)

ϕ = sympy.Symbol("x_0")

# Generate Polynomial

@nexprs $n j->(x_j = sympy.Symbol("x_j"))

@nexprs $n j->(ϕ += x_j * q^j)

ϕ = ϕ[:subs](x_0, $x0)

R_n = (k^θ + k*(1-δ) - ϕ)^(θ-1)*ϕ*β*θ - ϕ[:subs](k, k^θ + k*(1-δ) - ϕ)

x__0 = q*0

@nexprs $n j->( begin

x__j = sympy.simplify(x__{j-1} + $Hk*$c0*F′(R_n, j-1)[:subs](q, 0)/factorial(j-1))

ϕ = ϕ[:subs](x_j, x__j)

R_n = (k^θ + k*(1-δ) - ϕ)^(θ-1)*ϕ*β*θ - ϕ[:subs](k, k^θ + k*(1-δ) - ϕ)

end)

start = $x0

@nexprs $n j->(start += x__j )

sympy.simplify(start)

end

end

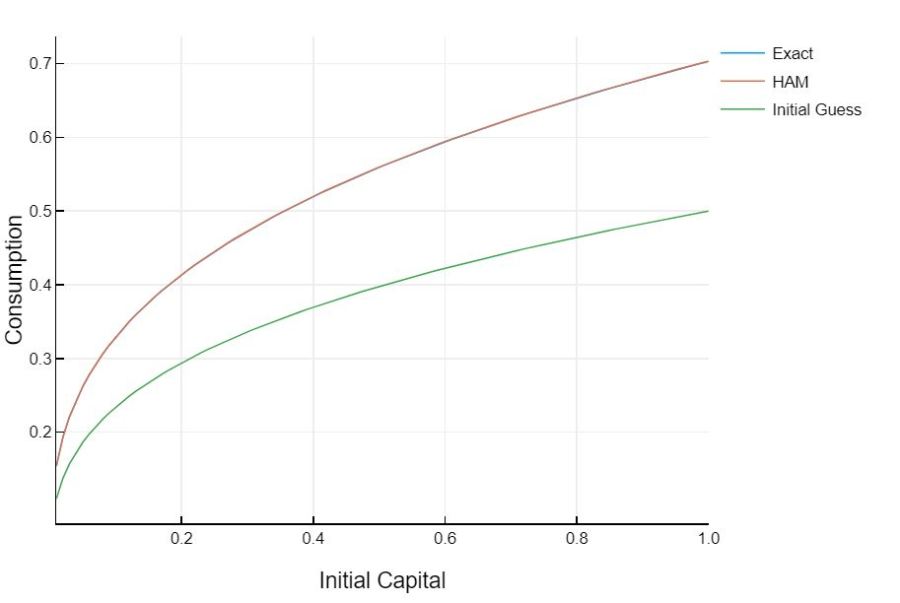

This is a special case where we know the exact solution to our problem. The solution to the baby growth model is .

Adding Depreciation

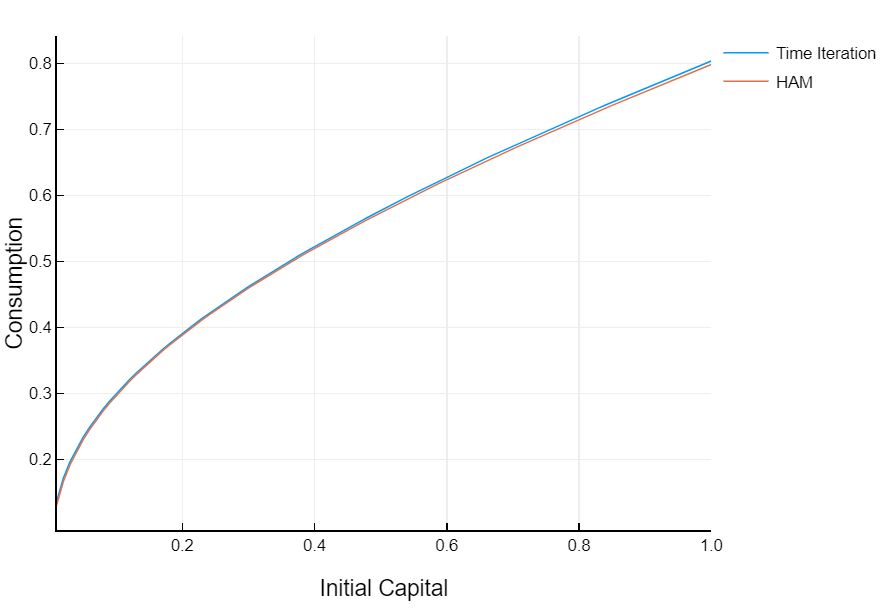

The baby growth model seemed a bit contrived. We already knew the functional form of the solution and so could make a good initial guess. If we add in depreciation however, we don’t have that same luxury. With depreciation, the optimal consumption rule will not be to consume a constant share of output. But that is okay. It will not materially impact our analysis or the good

It appears that this approximation is worse than the no depreciation case, but it is actually the time iteration curve that is incorrect. My time iteration code is only set up to solve a model with a non-inelastic supply of labor. Instead of solving a model with utility

I’m actually solving a model with utility

with set near zero. So the approximation using the Homotopy Analysis Method is actually more precise. Notice that our initial guess for the solution, which was consuming a constant share of capital, does not have the same functional form as the solution. The Homotopy Analysis Method is deforming our initial guess until it gives an approximate analytic solution. This is in contrast to the full depreciation case, where the solution had the same functional form as our guess. This just goes to show that the Homotopy Analysis Method can give very accurate approximations even when we don’t know what form the solution will take.

One point that we haven’t discussed in depth is how to choose the convergence control parameter. Our approximations were very accurate but depended heavily on this constant. In the full-depreciation example the convergence control parameter was set to but in the more general model we set it equal to

. This seems, and is, arbitrary. If we used higher order terms, so

etc. we would need to use a different convergence control parameter. If we had a different initial guess at

we would need to use a different parameter. If we used a different initial guess at the functional form we would need to use a different parameter. One of the strengths of HAM is that it is very flexible, but this makes it difficult to find generally applicable rules to use when choosing

,

, and

, almost to the point that the method isn’t very useful. Here are the heuristic rules that I use when solving problems.

- Ignore

unless derivatives appear in your nonlinear equation

- Choose an initial guess that conforms with the boundary conditions of your problem (not heuristic, this is just necessary) and, if possible, lead to separable terms in the nonlinear equation

- Choose

so that it eliminates the most terms in the nonlinear equation.

- Choose

so that the approximation evaluated at the steady-state returns the steady-state.

For , we want to choose

such that the nonlinear term is as simple as possible. In the growth model this resulted in consuming a constant fraction of output. The first nonlinear term, evaluated at

reduced to

Notice how much simpler this term is than if we guessed instead. Then we wouldn’t be able to work with just

but would need to carry around

in all of our calculations. Of course, with depreciation we will need to do this any ways, which brings us to point

. Using a good auxiliary function can reduce the number of terms in

that we need to calculate. By using

we just remove a term from entirely. This ultimately results in needing to calculate fewer derivatives for higher order approximations (remember that

depends on the number of terms in

, and so on). Finally, for point

, we generally are able to easily calculate what the steady state is in our problems. We can therefore use this information to find the right convergence control parameter. If our approximation is at all good it should return the steady state consumption when evaluated at the steady state capital. We can choose

to force this behavior at any level of approximation.